CyberTipline Report

2024 marked 40 years of operation for the National Center for Missing & Exploited Children. Over the past four decades, NCMEC has continuously confronted evolving threats against children and worked with law enforcement, legislators, industry, survivors and their families and others to create and implement solutions to keep children safe online.

NCMEC's CyberTipline was created in 1998 to receive reports of suspected child sexual exploitation from the public and electronic service providers (ESPs). Through this work, we support law enforcement efforts to stop child sexual exploitation and abuse and provide services to combat the harmful circulation of child sexual abuse material (CSAM).

This report includes data from reports made to the CyberTipline in 2024 and reflects the ever-changing nature of the threats against children and the landscape of online child protection.

Reports

In 2024, the CyberTipline received 20.5 million reports of suspected child sexual exploitation. While this is a significant number, the overall number of reports declined from 36.2 million reports received in 2023.

One positive reason behind this change is the implementation of a new CyberTipline feature that allows platforms to “bundle” related reports to streamline reporting of widespread incidents, such as viral meme content. Bundled reports still contain information on every reported user and incident, but they consolidate incidents tied to a single viral event into a single or smaller set of reports, reducing redundant submissions. In 2024, the bundling feature was implemented by Meta, which has often been one of the largest reporters to the CyberTipline, and this improvement contributed to some of the decline in overall reports.

However, when those 20.5 million reports, including the bundled reports, are adjusted to reflect reported incidents, we see that 29.2 million separate incidents of child sexual exploitation were submitted to the CyberTipline in 2024. Comparing the 29.2 million incidents in 2024 with their counterpart of 36.2 million reports in 2023 shows that online platforms reported approximately 7 million fewer incidents to the CyberTipline in 2024. This decline is especially concerning because the REPORT Act, which was enacted in 2024, requires companies to report two additional forms of child sexual exploitation for the first time: child sex trafficking and online enticement.

With additional mandatory reporting, we would have expected increased reporting, but our analysis identified multiple contributing factors to the decline, including a decrease in reports from certain platforms and a decline caused by further implementation of end-to-end encryption (E2EE). NCMEC has long raised concerns about implementing E2EE without an exception for detecting child sexual exploitation.

Despite this overall decline in reports, the CyberTipline continued to see an influx of time-sensitive reports involving children at risk for imminent harm and requiring urgent response and manual review. In 2024, NCMEC received an average of 50 reports a day that were marked as urgent by the electronic service providers (ESPs). Additionally, NCMEC systems alerted our staff to an additional 1,400 reports per day that contained chats, files, or other information indicating the report was potentially time-sensitive. Manual analysis of these and other reports resulted in NCMEC identifying and escalating for law enforcement more than 51,000 reports that were urgent or involved a child in imminent danger.

Other trends from 2024 addressed in this report include the continued global nature of child sexual exploitation online with 84% of reports resolving outside the U.S. and the identification of emerging threats with increased reporting related to generative artificial intelligence, online enticement and sadistic online exploitation.

Reporting Categories

The CyberTipline receives reports related to multiple forms of child sexual exploitation with child sexual abuse material being the largest reporting category and including reports related to the possession, manufacture, and distribution of sexually exploitative content.

In 2024, we continued to see an alarming increase in reports of online enticement, which has been rising for the last several years. This crime involves an adult communicating with a child for sexual purposes and includes sextortion. In 2024, NCMEC received more than 546,000 reports concerning online enticement - a 192% increase compared to reports in 2023. NCMEC anticipates this volume will continue to grow as more companies fulfill their reporting obligations under the REPORT Act.

The increased volume of child sex trafficking reports is also potentially an early result of the REPORT Act. In 2024 there were 26,823 reports - a 55% increase from 2023.

Images, Videos and Other Files

Reports made to the CyberTipline by ESPs can include images, videos and other files related to the child sexual exploitation incident being reported. The majority of reported files are related to suspected CSAM.

In 2024, CyberTipline reports contained 62.9 million images, videos and other files.

Emerging Threats

NCMEC leverages CyberTipline reports to identify new and evolving threats facing children online, helping inform the collective efforts of multiple agencies and organizations around the world to protect children online. Some trends that stood out in 2024 include:

Online Enticement

The continued rise in online enticement reports is fueled in part by the crime of sextortion, which involves an offender attempting to coerce sexual content, sexual activity or money from a child by threatening to share nude or sexually explicit images of the child. In many recent cases of financial sextortion, boys are being targeted by offenders who often use fake social media accounts to convince the boys to send them an image and then immediately begin demanding money. In 2024, NCMEC received nearly 100 reports of financial sextortion a day. Since 2021, NCMEC has become aware of more than three dozen teenage boys who have taken their lives as a result of being victimized by this crime.

Generative Artificial Intelligence Technology (GAI)

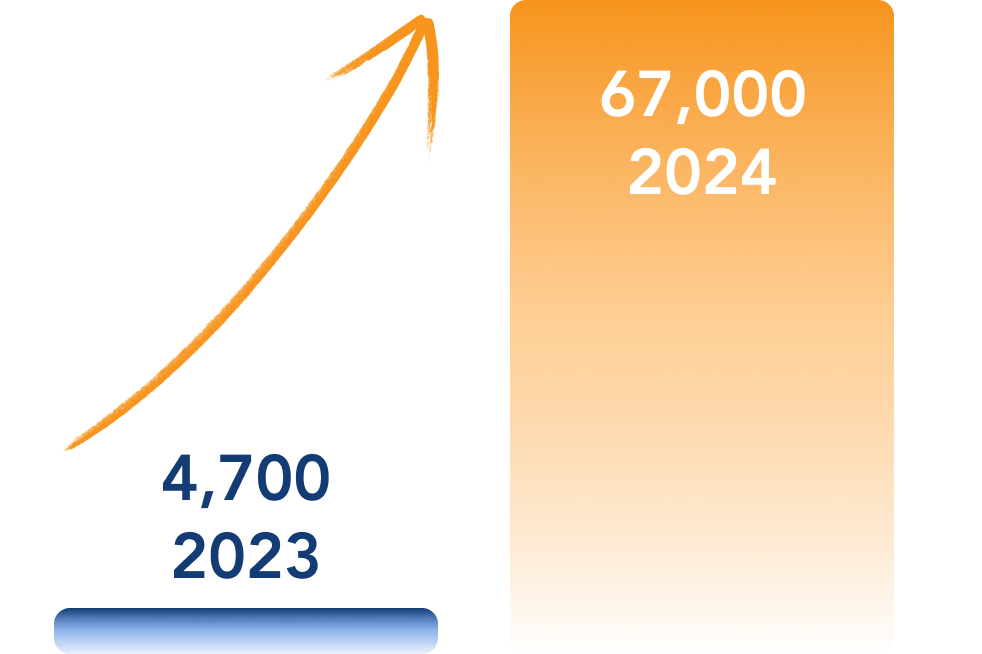

While AI can be very useful, many widely available generative AI tools can be weaponized to harm children, and the technology's use in child sexual exploitation has increased. This technology can be used to create or alter images, provide guidelines for how to groom or abuse children or even simulate the experience of an explicit chat with a child. In 2024, NCMEC's CyberTipline saw a 1,325% increase in reports involving generative AI, from 4,700 in 2023 to 67,000 in 2024.
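The percentage above follows from the standard percent-change formula. The following is a minimal sketch in Python, assuming the rounded counts quoted in this section as inputs; the function name `percent_change` is illustrative and not part of any NCMEC tooling.

```python
def percent_change(old: float, new: float) -> float:
    """Return the percent change from old to new."""
    if old == 0:
        raise ValueError("percent change is undefined when the old value is 0")
    return (new - old) / old * 100.0


# Rounded report counts quoted in the text (assumed inputs, not exact totals).
gai_reports_2023 = 4_700
gai_reports_2024 = 67_000

gai_increase = percent_change(gai_reports_2023, gai_reports_2024)
print(f"{gai_increase:.1f}%")
```

With these rounded inputs the result is roughly 1,325.5%, which is consistent with the reported 1,325% increase.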

Sadistic Online Exploitation

There has been an increase in reports involving sadistic online exploitation by violent online groups that encourage children to harm themselves and others, including cutting; creating CSAM and sexually exploiting other children (including their own siblings); harming animals; committing murder; and taking their own lives. In 2024, NCMEC's CyberTipline received more than 1,300 reports with a nexus to a violent online group, a more than 200% increase over 2023. The majority of these reports (69%) came from the public. In several cases, parents and caregivers reported after learning of their child's self-harm or suicide attempt. This highlights gaps in the detection, disruption and reporting of this abuse by electronic service providers.

Electronic Service Provider Reports

The CyberTipline receives reports from the public and online electronic service providers (ESPs) with the majority coming from ESPs. To date, more than 1,900 ESPs are registered to make reports, and 24% of these are non-U.S.-based companies that have voluntarily registered to report to the CyberTipline. In 2024, only 296 companies submitted CyberTipline reports and 10 ESPs accounted for more than 95% of the reports.

Changes in ESP reporting played a significant role in the overall decline in the number of reports submitted to NCMEC in 2024. Some key contributing factors other than Meta's use of the bundling tool included:

- Meta and Google removed several images from their CyberTipline process that violated terms of service but did not meet the legal definition of child pornography.

- Several online platforms with a history of consistent reporting to the CyberTipline submitted far fewer reports in 2024. These platforms, including Google, X, Discord, Microsoft and Synchronoss, submitted 20% fewer reports in 2024 than they did in 2023.

- Facebook implemented end-to-end encryption. Meta began to implement default E2EE in Facebook Messenger in December 2023 and completed that process in the summer of 2024.

U.S.–based ESPs are legally required to report instances of child sexual abuse material (CSAM) to the CyberTipline when they become aware of such content on their platforms. However, there are no legal requirements regarding proactive efforts to detect CSAM or what information an ESP must include in a CyberTipline report. As a result, there are significant disparities in the volume, content and quality of reports that ESPs submit.

The relatively low number of reporting companies and the poor quality of many reports highlight the continued need for action from Congress and the global tech community. Protecting children on these platforms will require the global tech community to enhance detection and reporting practices and legislators to create new laws that better protect children from exploitation online.

Public Reports

In addition to ESP reports, the CyberTipline received 164,497 reports from members of the public regarding suspected child sexual exploitation. In 2024, an increased percentage of public reports to the CyberTipline came from survivors or someone close to them as opposed to someone who witnessed something online but didn't know the victim.

In addition to the CyberTipline, NCMEC also offers Take It Down as a free service that helps victims and survivors of online CSAM remove from the internet nude, partially nude or sexually explicit photos and videos taken before they turned 18. Take It Down works by assigning a unique digital fingerprint – called a hash value – to the nude, partially nude or sexually explicit content. Online platforms can use Take It Down's list of hash values to detect reported images on their services allowing them to remove the content. In 2024, NCMEC received more than 83,000 submissions to Take It Down including more than 166,000 hashes.

In 2024, Take It Down was translated into eight additional languages: Danish, Greek, Hindi, Hungarian, Japanese, Polish, Romanian and Ukrainian. It is now available in 33 languages and is promoted to potential users around the world. The Take It Down website recorded more than 1.6 million visitors and more than 4 million page views. The majority of website visitors were based in the U.S., with India, Brazil, Turkey and Mexico making up the other countries with the most web visits to Take It Down.

CyberTipline Report Response

NCMEC's response to CyberTipline reports helps stop child sexual exploitation and the spread of CSAM online by making critical information available to law enforcement and online platforms. This includes making the reports and our additional analysis available to law enforcement to help them prioritize the most urgent cases; and providing resources and notification services to companies to help them locate and remove content on their platforms. The elements of that response in 2024 are outlined below.

Referrals and Informational Reports

NCMEC categorizes CyberTipline reports based on the quantity and quality of information included and whether it can help law enforcement take action:

A referral is a report in which the tech company provides sufficient information for law enforcement, usually including user details, imagery and a possible location.

An informational report is one in which the tech company provides insufficient information or where the imagery is considered viral and has been reported many times.

Reports without adequate information cannot help a child, and they can actually slow down the ability to reach children who need help by creating a larger volume of reports that must be addressed.

NCMEC notifies companies when their reports consistently lack substantive information.

In 2024, more than 8% of CyberTipline reports submitted by the tech industry contained so little information that it was not possible for NCMEC to determine where the offense occurred or the appropriate law enforcement agency to receive the report. Among companies that made at least 100 reports in 2024, more than half of the reports submitted by the companies listed below lacked adequate information to determine a location.

- Amazon AI Services

- Anthropic

- Aylo Freesites Ltd (dba Pornhub)

- Bdsmlr

- Bluesky PBC

- Box, Inc.

- gayboystube

- Grindr

- Internet Archive

- Invoke AI, Inc.

- Lightspeed Systems

- Matrix.org Foundation

- Reblogme

- Redgifs.com

- Streamable, Inc

- Wikimedia Foundation Inc.

- Zoom Video Communications, Inc

File Review and Triage

Child sexual abuse images and videos are often circulated and shared online repeatedly. CSAM depicting a single child victim can be circulated for years after the initial abuse occurred. For example, one child's abusive imagery has been circulated for the past 19 years, appearing more than 1.3 million times in submissions to NCMEC. One of the CyberTipline's critical functions is to identify unique images among the files included in reports through the work of NCMEC staff analysts and the use of technology. In 2024, ESPs submitted 28 million images to the CyberTipline, of which 12.4 million (44%) were unique. Of the 33.1 million videos reported by ESPs, 8.1 million (25%) were unique.
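The unique-file percentages above are simple ratios of unique files to total files. The following is a minimal sketch in Python, assuming the rounded figures (in millions) quoted in this section; `unique_share` is an illustrative helper name, not an NCMEC function.

```python
def unique_share(unique: float, total: float) -> float:
    """Return the percentage of submitted files that were unique."""
    if total <= 0:
        raise ValueError("total must be positive")
    return unique / total * 100.0


# Figures in millions, as quoted in the text (rounded, so results are approximate).
image_share = unique_share(12.4, 28.0)
video_share = unique_share(8.1, 33.1)

print(f"images: {image_share:.1f}% unique")
print(f"videos: {video_share:.1f}% unique")
```

Because the inputs are rounded to the nearest 0.1 million, the computed shares can differ from the published percentages by about a point.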

NCMEC analysts review suspected CSAM submitted by companies and label images and videos with information about the type of content, the estimated age range of the children seen and other details that help law enforcement prioritize the reports for review. For example, labels can indicate if the imagery contains elements like violence or bestiality or if it involves infants or toddlers.

After labeling files, NCMEC's systems use robust hash matching technology to automatically recognize future versions of the same images and videos reported to the CyberTipline. The automated hash matching process reduces the amount of duplicative CSAM that NCMEC staff view and focuses their attention on newer imagery. This process helps ensure the most urgent CyberTipline reports, where a child may be suffering ongoing abuse, get immediate attention.

Hash Sharing

Hash values are unique digital fingerprints assigned to pieces of data, such as images and videos. They are an important tool in the effort to stop the spread of CSAM. When an image or video is identified as containing CSAM and has been reviewed and confirmed at least three times by NCMEC staff, NCMEC adds its hash value to a list that is shared with technology companies.

On a voluntary basis, companies can elect to use NCMEC's hash list to detect CSAM on their systems so that abusive content can be reported and removed. As of December 31, 2024, NCMEC shared more than 9.8 million hashes with 55 ESPs and 17 non-traditional ESPs who have voluntarily chosen to access this hash-sharing initiative.
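The voluntary detection step described above amounts to checking each file's fingerprint against a shared list. The following is a minimal sketch in Python under stated assumptions: SHA-256 stands in for the fingerprint algorithm (this report does not say which algorithms are used), and `file_hash`, `find_matches` and the sample data are hypothetical names and values chosen for illustration.

```python
import hashlib


def file_hash(data: bytes) -> str:
    """Return a hex digest used as the file's fingerprint (SHA-256 is an assumption)."""
    return hashlib.sha256(data).hexdigest()


def find_matches(uploads: dict[str, bytes], shared_hashes: set[str]) -> list[str]:
    """Return the names of uploads whose fingerprint appears on the shared list."""
    return [name for name, data in uploads.items() if file_hash(data) in shared_hashes]


# Hypothetical stand-in data: harmless byte strings play the role of files.
shared_hashes = {file_hash(b"previously confirmed file")}
uploads = {
    "a.bin": b"previously confirmed file",
    "b.bin": b"unrelated file",
}

matches = find_matches(uploads, shared_hashes)
print(matches)
```

An exact cryptographic hash like the one assumed here matches only byte-identical copies, so a production system would likely need additional techniques to recognize resized or re-encoded versions of the same file.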

Removing Content

When CSAM is reported from around the world, NCMEC can provide crucial support to child victims by notifying relevant platforms to review and remove any explicit images of the child.

NCMEC staff review the reported imagery and if it falls in one of the three categories below, a notification is made to the ESP where the image or video is located:

Based on a company's terms of service, imagery may be removed and/or users blocked in response to a notification. Once a notice has been sent, NCMEC staff track the status of each case and continue to generate additional notices until the content is addressed.

Domestic and Global Response

A critical function of the CyberTipline is to refer reports to the law enforcement agency that is best able to respond to and address the issue being reported. Federal statute 18 USC 2258A requires U.S. companies to report to the CyberTipline if they become aware of suspected CSAM on their platforms and servers. Because these companies have users worldwide and those incidents are reported to NCMEC, by extension the CyberTipline serves as a global clearinghouse and reports are referred to law enforcement throughout the U.S. and around the world.

Domestic Response

While the majority of reports submitted to the CyberTipline resolved outside of the United States, more than 1 million reports resolved to the United States. Of these, 968,717 reports resolved to a specific state and for 209,041 the state was unknown. The CyberTipline reports are made available to Internet Crimes Against Children Task Forces and other local, state and federal agencies. When the state is unknown, the reports are made available to federal law enforcement in the U.S.

NCMEC works closely with law enforcement throughout the U.S. providing training and resources to support their response to CyberTipline reports.

Global Response

With more than 84% of reports being referred outside the U.S., NCMEC has forged partnerships with law enforcement in 167 countries and territories that receive CyberTipline reports, including Interpol and Europol. Interpol also assists in the dissemination of CyberTipline report information to certain countries where NCMEC doesn't have a direct connection to law enforcement. These important connections to law enforcement allow for a quick and seamless referral of CyberTipline reports to help ensure children around the world are safeguarded and offenders are held accountable. A report referred to a single country based on where the content may have been uploaded can still have a nexus with other offenders or victims located in other countries around the world, including the U.S.

With private and public/federal support, NCMEC staff provide CyberTipline trainings in other countries, mentor NGOs seeking to expand technical and operational capacities within their own hotlines, educate on best practices and share child safety and prevention material around the world. We collaborate with dozens of global NGOs, including WeProtect, ECPAT, International Justice Mission (IJM), Internet Watch Foundation, the Canadian Centre for Child Protection, UNICEF and many others. NCMEC is also a founding member of INHOPE, a global network of 50 member hotlines across six continents.

Case Management Tool

The NCMEC Case Management Tool (CMT), developed with support from the U.S. Office of Juvenile Justice and Delinquency Prevention (OJJDP) and Meta, enables NCMEC to share reports securely and quickly with law enforcement around the world.

The CMT allows law enforcement in the U.S. and abroad to receive, triage, prioritize, organize and manage CyberTipline reports. Through robust and customizable display data, dashboards and metrics, law enforcement personnel can tailor their report queue for more immediate triage and better response.

The CMT also helps police agencies refer reports to other law enforcement agencies for a more targeted response. The system helps NCMEC notify law enforcement of high priority reports.

In support of easier adoption and use by international users, the CMT fields and interface are available in eight languages (English, Spanish, French, German, Portuguese, Arabic, Hindi and Thai) with additional translations planned. Domestically, all Internet Crimes Against Children Task Forces, the FBI and Homeland Security Investigations also have access.

OJJDP CyberTipline Report

In consultation with the Office of Juvenile Justice and Delinquency Prevention (OJJDP), NCMEC prepared an additional transparency report regarding CyberTipline activity in 2024. It is a complementary resource to the report on this page and contains additional detail about the reports made to the CyberTipline in 2024.
